Agents Aren’t People: Why AI Security Requires Workload Identity

AI agents have the potential to significantly transform how enterprises operate. Pairing frontier AI models with tools — giving them the ability to search, write, execute and interact with external systems — has opened up a wealth of opportunities across a broad range of industries. But the same properties that make them powerful also make them dangerous. Hallucinations can cause agents to act on false information; prompt injection attacks can hijack their behaviour; and non-determinism means the same input can produce wildly different outcomes. Until these challenges are addressed, autonomous agents remain a liability as much as an asset.

So what is an agent really?

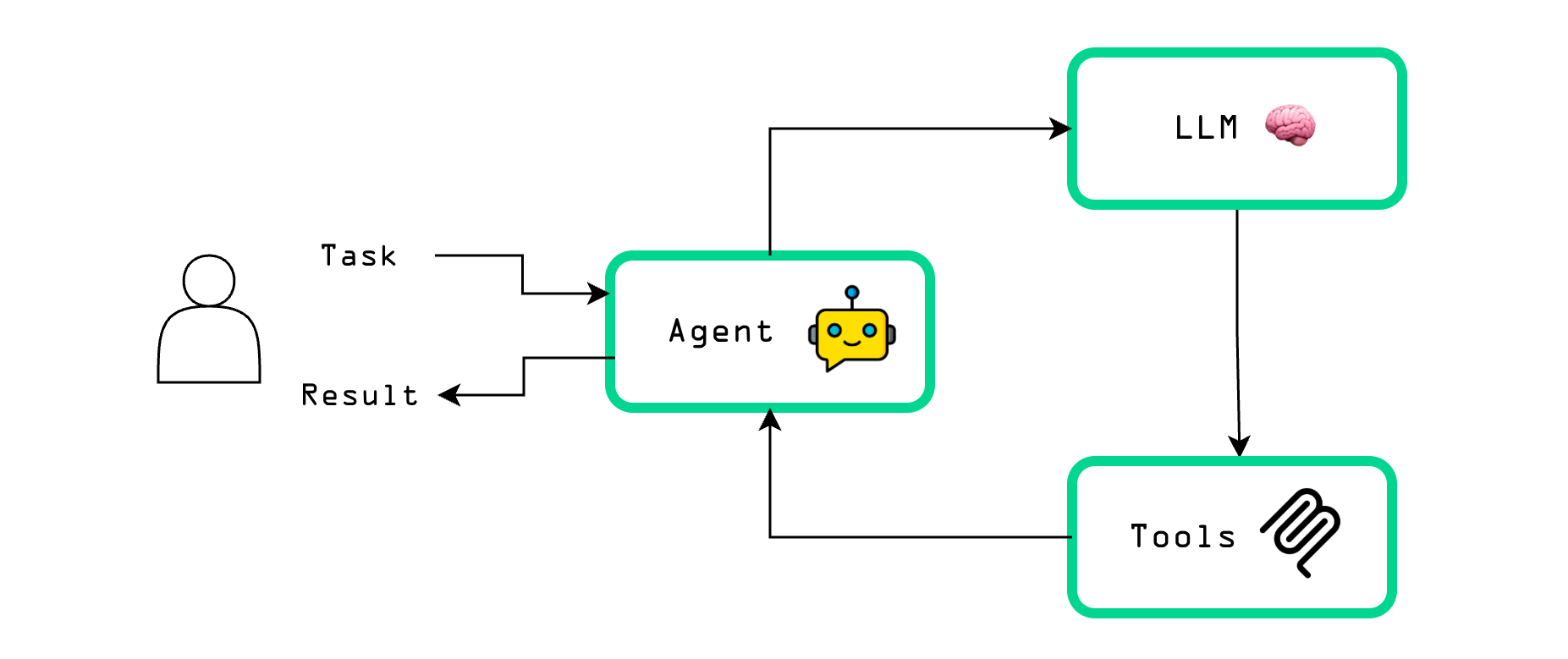

An AI agent is a system where a large language model (LLM) is given a goal and the means to pursue it autonomously. Rather than simply generating a response to a prompt, the agent can take actions, observe results, and iterate — continuing until the goal is achieved or it determines it cannot proceed further.

As Simon Willison puts it, at its core:

> an LLM agent runs tools in a loop to achieve a goal

The agent receives an initial request — whether from a user (such as "write me some Python code that does X") or an external trigger (such as an alert from an autonomous monitoring system) — and is equipped with a set of tools that allow it to take action: writing code to a file, executing it to verify it works, or analysing and remediating whatever triggered the alert. This request, along with the available tools, is sent to an LLM, which responds with an instruction to invoke a particular tool. The result of that tool call is then fed back to the LLM, which either takes further action or produces a final response — incorporating what it learned from the tool — back to the user.

In modern agentic AI systems, this LLM → tool use → LLM → response flow can be extended by allowing multiple rounds of tool calls (e.g. if the tool result shows that the generated Python code doesn’t work, the LLM can iterate on the code until it does) or by calling multiple tools in parallel. Furthermore, tool calls are not limited to local actions such as writing files and running code: they often involve interacting with external systems, for example fetching (possibly sensitive) data from another service or searching the web for up-to-date information on a topic. This external connectivity makes the security concerns around hallucinations, prompt injection and non-determinism all the more pertinent.
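The loop described above can be sketched in a few lines of Python. This is a minimal illustration rather than any particular framework's API: `fake_llm`, the `TOOLS` registry, the message dictionaries and the `max_steps` limit are all hypothetical names invented for this example, and the "model" is a stub that requests one tool call and then answers.

```python
from typing import Callable

# Hypothetical tool registry: tool name -> function taking and returning a string.
TOOLS: dict[str, Callable[[str], str]] = {
    "run_code": lambda source: "ok" if "print" in source else "error: no output",
}


def fake_llm(history: list[dict]) -> dict:
    """Stand-in for a real model call: asks for one tool call, then answers."""
    last = history[-1]
    if last["role"] == "user":
        return {"type": "tool_call", "tool": "run_code", "input": "print('hi')"}
    return {"type": "final", "content": f"Done, tool said: {last['content']}"}


def run_agent(request: str, llm=fake_llm, max_steps: int = 5) -> str:
    """Run tools in a loop until the model produces a final response."""
    history = [{"role": "user", "content": request}]
    for _ in range(max_steps):
        reply = llm(history)
        if reply["type"] == "final":
            return reply["content"]
        tool = TOOLS.get(reply["tool"])
        if tool is None:
            result = f"error: unknown tool {reply['tool']}"
        else:
            result = tool(reply["input"])
        # Feed the tool result back to the model for the next iteration.
        history.append({"role": "tool", "content": result})
    return "stopped: step limit reached"
```

Note that every tool result is fed straight back into the model's context, which is exactly where injected instructions from an external system could enter the loop. The step limit is one simple guard against a non-deterministic model that never converges.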

It may be tempting to think that we can simply treat agents as untrusted, inexperienced (human) team members and grant them appropriate user-like permissions; however, this classification leads to various issues further down the line. In particular, agents cannot explain why they took a particular action: the underlying LLM simply generates plausible-sounding responses based on the input provided to it, regardless of how person-like those responses may appear. On the other hand, modern agents are capable of advanced deductive reasoning and highly technical problem solving when given access to appropriate tools and context, so shackling agents with minimal permissions may limit their practical utility.

So how should forward-thinking enterprises maximise the value of these powerful new systems without introducing unacceptable security risks into their environment?

Agentic AI security

Two key questions which must be addressed when deploying AI agents in enterprise environments are:

- How can we authenticate agents reliably?

- How can we authorize an agent to use the minimum tool set necessary to achieve its goal safely?

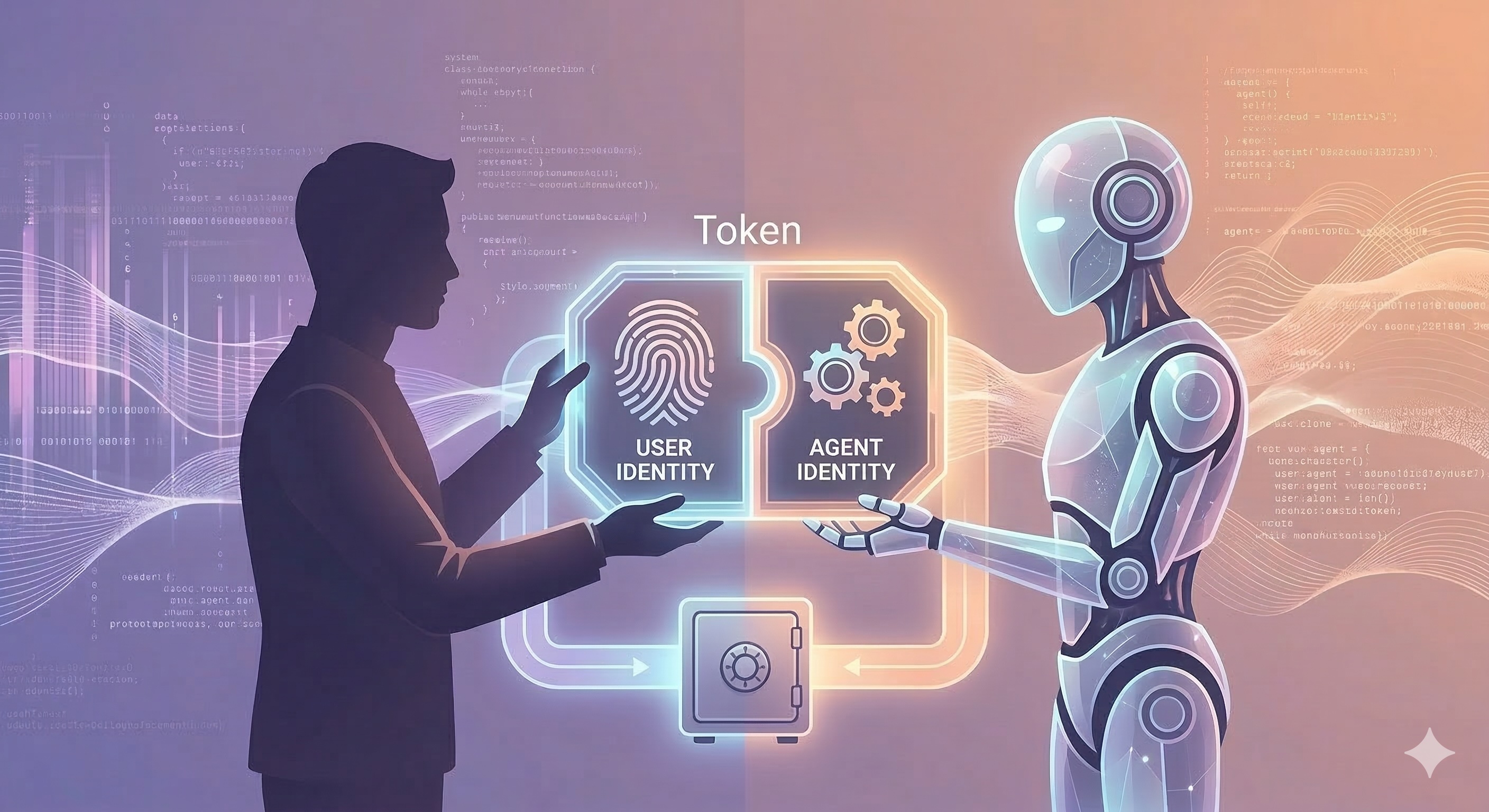

The first is a foundational requirement for establishing robust security policies and clear audit trails, particularly when agents are acting on behalf of users in a semi-autonomous fashion. Any agent must be uniquely identifiable when interacting with other agents and external systems — and critically, its identity must always be bound to that of the human user who is ultimately responsible for its actions. An agent acts as a delegate, not an independent principal.

The second is a core competency of any agentic AI security platform and must include a permissions model which is dynamic enough to allow agents to work effectively and exercise agency, while also remaining manageable and minimising operational friction for the people making the policy decisions. The objective is to grant an agent least-privilege access to data from external sources in a secure manner, bounded by three constraints: the access control policy governing the agent itself; the permissions of the human who triggered it; and the original intent of the user's request, which keeps the agent scoped to what was actually asked of it rather than pursuing adjacent actions it is technically permitted to take. Together, these constraints ensure that no user can exceed their own access rights simply by routing a request through an agent. Special consideration must also be given to interoperability with existing legacy systems, since this is where the majority of useful data currently resides.
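One way to picture the three constraints is as a set intersection: an action is permitted only if the agent's policy, the user's permissions and the scope of the request all allow it. The sketch below assumes permissions can be modelled as simple strings; the permission names and the `effective_permissions` helper are hypothetical, and a real policy engine would be considerably richer.

```python
def effective_permissions(
    agent_policy: set[str],
    user_permissions: set[str],
    request_scope: set[str],
) -> set[str]:
    """Return only the permissions allowed by all three constraints."""
    return agent_policy & user_permissions & request_scope


# Hypothetical permission names, for illustration only.
agent_policy = {"tickets:read", "tickets:write", "wiki:read"}
user_permissions = {"tickets:read", "wiki:read", "payroll:read"}
request_scope = {"tickets:read"}  # the user only asked about tickets

allowed = effective_permissions(agent_policy, user_permissions, request_scope)
```

Here the agent ends up with `tickets:read` alone: it cannot use `payroll:read` (outside its own policy), `tickets:write` (the user lacks it) or `wiki:read` (outside what was asked).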

Fortunately, neither of these challenges is unique to AI agents, and there is prior art to lean on (and learn from) in the form of existing open standards and open source tooling.

Agentic identity

Despite the buzz around AI agents, at their core, they are simply a new class of workload. They are applications that run as processes, ideally in an isolated execution environment, with a clearly-defined purpose, and communicate with other services using existing protocols such as HTTP.

The gold standard for modern workload identity is the Secure Production Identity Framework For Everyone (SPIFFE). Its robust and flexible design has helped it to become a widely adopted identity standard for forward-thinking technology companies. SPIFFE is the ideal foundation for agentic identity since it provides unique, cryptographically verifiable identities to workloads. This allows agents to be reliably identified based on what they are and where they are running, rather than trusting the agent with an API key or some other secret identifier and hoping the agent isn’t tricked into sharing that secret via prompt injection or some other nefarious means.
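A SPIFFE ID is a URI of the form `spiffe://<trust domain>/<path>`. The sketch below shows how a service might check which agent it is talking to, using a deliberately simplified validation (the full SPIFFE ID rules are stricter). The trust domain `example.org`, the `/agents/` path convention and both function names are assumptions made for this example. In practice the ID would be read from a cryptographically verified SVID, since a bare string proves nothing on its own.

```python
from urllib.parse import urlsplit


def parse_spiffe_id(spiffe_id: str) -> tuple[str, str]:
    """Split a SPIFFE ID into (trust_domain, path) with simplified checks."""
    parts = urlsplit(spiffe_id)
    if parts.scheme != "spiffe":
        raise ValueError("scheme must be 'spiffe'")
    trust_domain = parts.netloc
    # A SPIFFE ID carries no user info or port in its authority component.
    if not trust_domain or "@" in trust_domain or ":" in trust_domain:
        raise ValueError("invalid trust domain")
    if parts.query or parts.fragment:
        raise ValueError("query and fragment are not allowed")
    return trust_domain, parts.path


def is_expected_agent(spiffe_id: str, trust_domain: str, path_prefix: str) -> bool:
    """Check an (already verified) ID against an expected domain and path prefix."""
    try:
        domain, path = parse_spiffe_id(spiffe_id)
    except ValueError:
        return False
    return domain == trust_domain and path.startswith(path_prefix)
```

Because the decision is based on a verified identity rather than a shared secret, there is nothing in the agent's context for a prompt injection to extract.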

While SPIFFE is the open standard for secure workload identity systems, SPIRE is the production-ready reference implementation of the SPIFFE standard. It consists of a simple server and (non-AI) agent architecture which can scale across hybrid infrastructure, from Kubernetes clusters, to cloud-provider VMs, to bare-metal servers and edge computing devices.

When integrated into existing enterprise environments, SPIFFE and SPIRE enable workloads (AI or otherwise) to operate without long-lived static credentials, such as API keys or service account tokens, and, thanks to open standards, allow external systems including major cloud providers or LLM inference APIs to trust SPIFFE-backed identities using workload identity federation and other similar techniques.

SPIFFE and SPIRE are both graduated projects of the CNCF and provide the perfect substrate for robust AI agent identity management. For more information on Cofide's approach to workload identity, see Introducing Cofide Connect.

Agentic authority

Having established a robust identity management layer for AI agents using SPIFFE and SPIRE, the next major challenge for an enterprise is deciding what each agent is and isn’t authorized to do. While it may be tempting to treat agents like employees, granting broad access and trusting them to act appropriately, this approach lacks the auditability, enforceability and least-privilege rigour that enterprise security demands. Fortunately, the OAuth 2.0 framework, which is the dominant industry standard for system-to-system authorization, offers a solid foundation for a secure AI agent authorization system.

One of the most important aspects of agentic authorization is that agents should not be allowed to impersonate a human user: an agent has its own workload identity and acts as a delegate of the user, not as the user. Instead, an agent authorization system must capture the fact that human users are delegating work to the agents, whilst maintaining cryptographic proof of the originating human's identity and authorization decision. This distinction is what enables robust audit trails and flexible, identity-aware authorization policies.

Existing OAuth standards already incorporate the concept of delegation. In particular, the OAuth 2.0 Token Exchange specification (RFC 8693) includes provisions for distinguishing between who is granting authorization and who (or what) is using that grant to access an OAuth-protected resource. This model maps neatly onto the ‘user delegating work to an AI agent’ scenario that is prevalent in modern agentic AI systems.
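To make the delegation model concrete, the sketch below builds the form body of a token exchange request and interprets the `act` (actor) claim that the specification defines for delegated tokens. The grant type, token type URIs, parameter names and `act` claim come from the OAuth 2.0 Token Exchange specification. Everything else is an assumption for illustration: the helper names, the example identities, and the choice to present the user's token as the subject token and the agent's own JWT credential as the actor token. It is not a description of Cofide's implementation.

```python
from urllib.parse import urlencode

TOKEN_EXCHANGE_GRANT = "urn:ietf:params:oauth:grant-type:token-exchange"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"
JWT_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:jwt"


def build_token_exchange_body(
    user_token: str, agent_token: str, audience: str, scope: str
) -> str:
    """Form-encode a request where the user is the subject and the agent the actor."""
    return urlencode({
        "grant_type": TOKEN_EXCHANGE_GRANT,
        "subject_token": user_token,        # who is granting authorization
        "subject_token_type": ACCESS_TOKEN_TYPE,
        "actor_token": agent_token,         # who will use the grant
        "actor_token_type": JWT_TOKEN_TYPE,
        "audience": audience,
        "scope": scope,
    })


def describe_delegation(claims: dict) -> str:
    """Summarise who is acting for whom, based on the 'act' claim."""
    actor = claims.get("act", {}).get("sub")
    if actor is None:
        return f"{claims['sub']} acting directly"
    return f"{actor} acting on behalf of {claims['sub']}"


# Hypothetical claims from a delegated token issued after the exchange.
claims = {
    "sub": "alice@example.com",
    "act": {"sub": "spiffe://example.org/agents/code-assistant"},
}
summary = describe_delegation(claims)
```

Because the resulting token names both the user (`sub`) and the agent (`act.sub`), a resource server or audit log can record that the agent acted on the user's behalf, rather than seeing a request that appears to come from the user directly.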

In a series of future blog posts, we’ll share more details about how Cofide are combining open standards, such as SPIFFE and OAuth, with capabilities including token exchange, to enable secure, auditable and scalable deployments of AI agents in enterprise scenarios, including:

- End-to-end agentic AI workflows without any long-lived secrets or API keys - an agent can’t leak secrets that it doesn’t have!

- Dynamic, policy-driven token exchange which is transparent to applications

- Scalable policy management with robust versioning and auditing capabilities

- Seamless integration between AI and non-AI workloads backed by a unified identity platform

- Cross-trust-domain federation with legacy systems

Building on the foundations of open standards

While many companies are promoting proprietary approaches for solving the identity and authorization problems associated with enterprise agentic AI use cases, at Cofide we’re building on the hard-won acceptance of open standards, including SPIFFE and OAuth.

Not only does this maximise compatibility between greenfield AI agents and ‘legacy’ non-AI workloads, it also helps an enterprise to future-proof against unforeseen business events or changes in direction from companies with proprietary offerings.

If you would like to learn more about how Cofide can help to secure your AI agents and provide a secure platform for enterprise workload identity, please get in touch.